Today, one of my colleague reached out to me that one of her database has gone into SUSPECT mode. She explained that while she was deploying the db changes, there was an unexpected shutdown happened on the server. While rebooting the server, it is observed the database has gone into SUSPECT mode.

Primary reasons when an SQL Server Database is marked in Suspect Mode

There may be many reasons for a db is getting into a SUSPECT mode. This is the worst case for any database can get as this would lead the database unusable.Here are few reasons I could think immediately, but there could be many other reason, feel free to add in the comment section if I miss anything.

SQL Server Crash

Database Files are inaccessible

Improper shut down of SQL Server System

Sudden Power Outage

Hardware Failure etc.

Steps to Fix the SQL Server Database Suspect Mode Error

There are many articles on how to repair a SUSPECT db out there on a google search.

Bring Database in EMERGENCY MODE

Perform Consistency Check – DBCC CHECKDB

Bring the Database in SINGLE_USER Mode with rollback immediate

Take a Full Backup of the User Database

Execute DBCC CHECKDB WITH REPAIR ALLOW DATA LOSS (Note: It might result in Data Loss)

Bring the Database in MULTI_USER Mode for normal operations

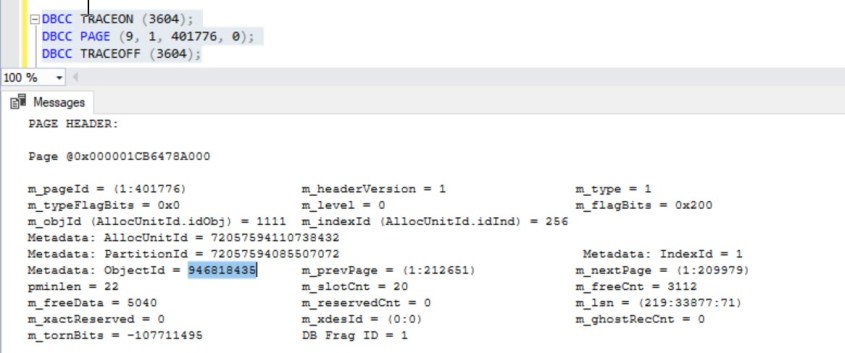

Now, Let us look at our specific issue. Firstly, we tried to get this database in EMERGENCY mode with the below T-SQL.Unfortunately, we did end up with an exception given below which is clearly saying that this db has got a severe consistency issue which cannot be used further.

ALTER DATABASE dbname SET EMERGENCY

User does not have permission to alter database ‘dbname’, the database does not exist, or the database is not in a state that allows access checks.

How to drop SUSPECT database?

As we identified that this database has severely corrupted and it cannot be repair nor recovered, there is no way other than dropping the database. If you have backup of this database, you can always do restore from the latest valid backup (even up to the point in time). But unfortunately, in our case, it will not even allow you to drop and it would end up with the above same error message for DROP as well.

So, how do we drop this db? Its bit tricky and needs some outage of SQL Server (since one of the step is stopping the service).

Stop the SQL Server service.

Rename the file (MDF or LDF or both) of this database.

Start the SQL Server service.

Drop the database.

Caveat: The above method is recommended on non- production environments generally.

I’d like to grow my readership. If you enjoyed this blog post, please share it with your friends!