Definition of Data Cleaning

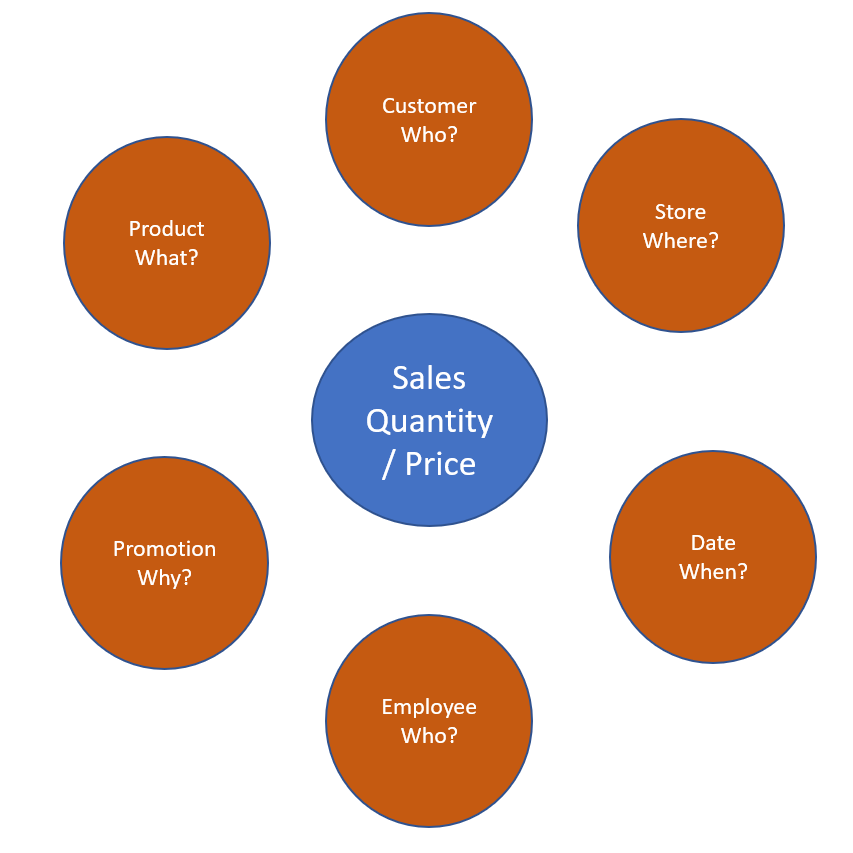

Data cleaning is a process of preparing data for analysis by removing or modifying data that are incorrect, duplicated, incomplete, irrelevant and improperly formatted. As per industry experts, most of data analysts/scientists spend their 70% effort in Data cleaning processes. We can refer data is good if that follows the below characteristics: Validity, Accuracy, Consistency, Completeness and Uniformity.

Data Cleaning Techniques

Remove bad characters

Removing unnecessary whitespaces is one of the most important activity in Data Cleaning Process.

| Tech | References |

| SQL Server | FN_RemoveBadCharacter |

| Excel | TRIM |

Remove blank/null data

Blank data is a concern for all analysts as they cannot arrive to a decision making system due to unavailability of right or required information. We may need to make our system intelligent enough to handle null values.

| Tech | References |

| SQL Server | Default constraint in SQL Server |

Remove Duplicates

Duplicates are always a pain area for Data Analysts. This could lead to a wrong understanding or perception of data that ends up with a bad conclusions. Few of reasons for duplicate data are redundant processing due to any failures, absence of exception handling, multiple manual entries etc. In database management ecosystem, we can enforce UNIQUE constraint to avoid duplicate entries into column(s). As an another option, we can identify the duplicate values and delete those dirty data(this can be scheduled to run frequently).

| Tech | References |

| Python | Identify duplicates in python |

Typographical Errors

Typo errors are another important reason for dirty data. There are different types of typographical errors that can cause difficulty for many analysts and scientists.

Improper Kerning/leading: The spacing between letters or words are always a concern. This can lead to confusions and unclear situations. For eg. the word “away” becomes “a way” without a proper Kerning can cause totally different meaning. As an another note, leading is the space between two lines, that is also important for a reading aspect. Leading space cannot be a worrisome, but that improves the reading capability.

Capitalization inconsistencies

These types of errors are causing when a writer does not capitalize a word when it is required or otherwise. For eg. Name of a person, country, state, month etc. needs to be denoted with a capital letter. Few of common rules are given in this post.

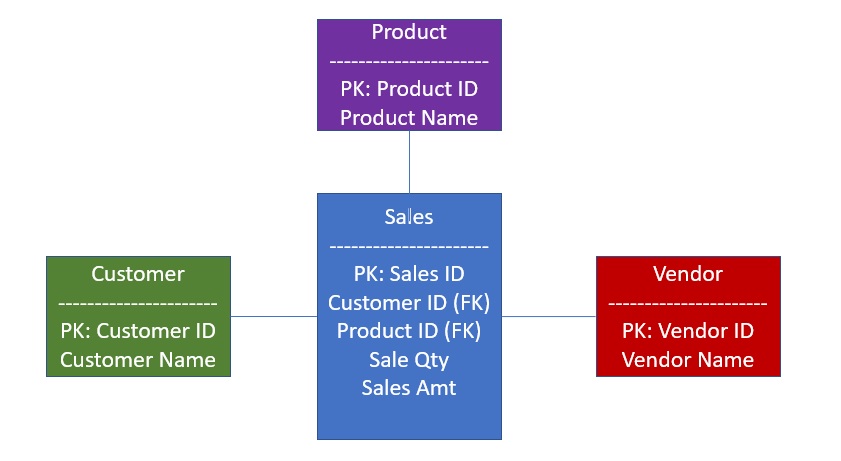

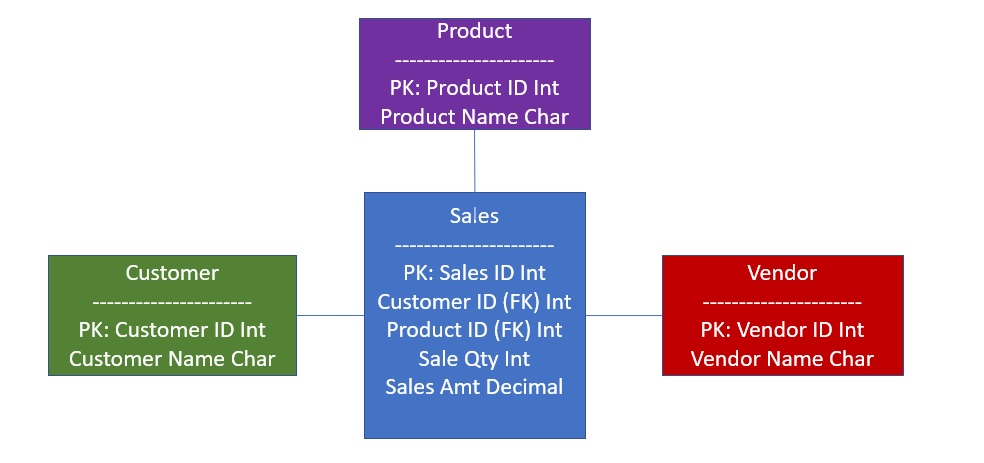

Data Type Inconsistencies

Data is stored depending on the type of field defined. If the source and target data types are different, there could be missing of complete data, for eg. decimal to integer. Currently many tools supports features to automatically identifying the data type fro unstructured data.

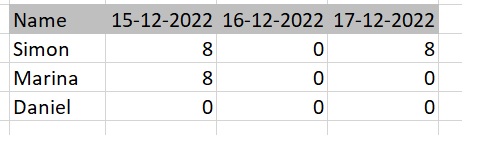

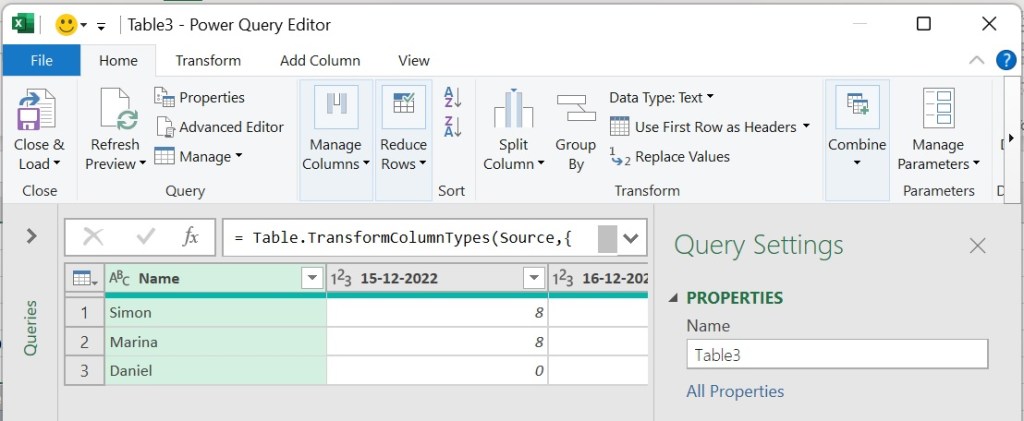

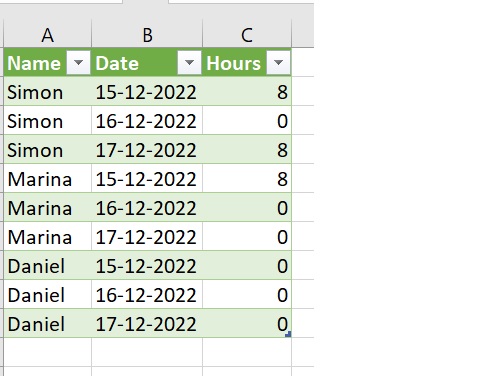

Formatting Irregularities

Formatting issues with source is always a problem for many data engineers/analysts during data preparations, transformations etc. This post explains some of issues in Excel formatting.

Advantages of Data Cleaning

At the end of the data cleaning process, the system will be benefitted of the following:

- Business qualified and quantified data

- Improved Decision making

- Revenue Booster

- Cost Effective

- Increased Productivity

Disadvantages of Data Cleaning

- Data cleaning can eliminate outliers sometimes that can cause missing of some insights.

- The process is very time consuming and expensive.

- Automation of cleaning process needs to be drafted with utmost care and validations.

If you enjoyed this blog post, please share it with your friends!